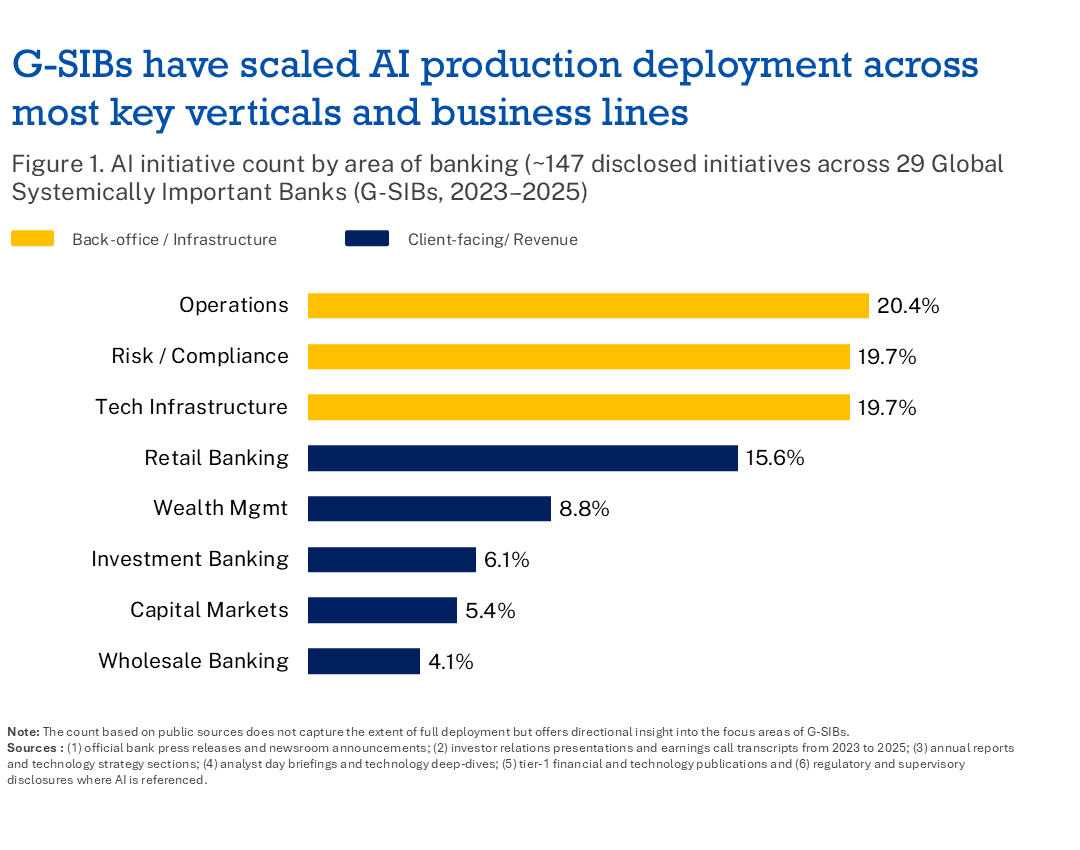

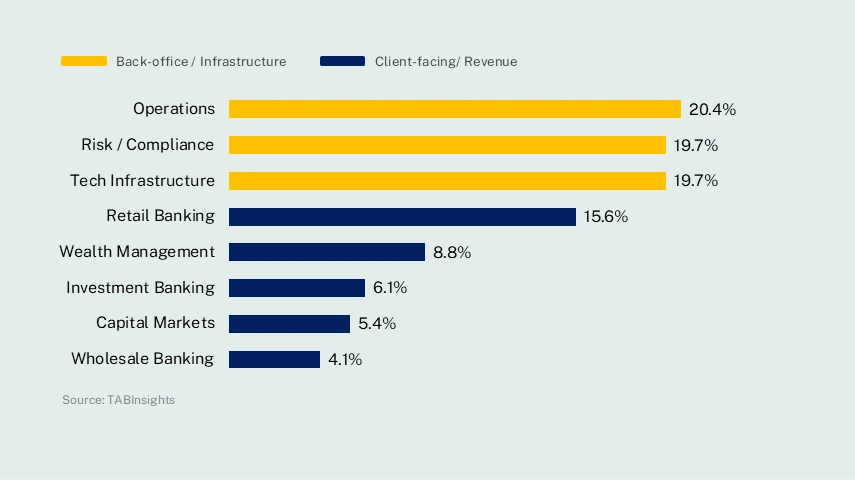

AI adoption across G-SIBs is concentrated in operational efficiency, risk and compliance, and technology infrastructure, which together account for 59% of all initiatives. The common thread is an immediate focus on productivity tools for employees and on streamlining internal processes. These banks are nonetheless keeping an eye on the bigger picture: one that can transform how they operate and conduct business with customers.

Leading G-SIBs have pursued a platform architecture from the outset. They integrate data, models, governance, talent and business accountability into a coherent enterprise operating model, rather than treating AI as a portfolio of isolated initiatives spread across business and functional areas.

By building a centralised AI orchestration and operating layer, leading G-SIBs have achieved faster enterprise scalability than their peers. Key to this approach is the implementation of proprietary on-premises AI platforms such as JPMorgan Chase’s LLM Suite (US), ICBC’s Zhiyong platform (China) and BNP Paribas’s ‘LLM-as-a-service’ (France).

This deliberate sequencing of AI investment—infrastructure and platform first, applications second—reflects a strategic approach: sustainable AI deployment requires a governed, unified data foundation and orchestration layer rather than discrete point solutions. As AI technologies continue to mature, G-SIBs will increasingly need to navigate the complexities of agentic AI systems, while being prepared for intensified regulatory scrutiny across global jurisdictions.

AI is reshaping business and operational models rather than automating them

The ongoing transition across G-SIBs represents not a continuation of digital transformation, but a fundamental departure from it. Whereas the previous era focused on automating existing processes, banks are now using AI to rebuild those processes once more, shifting the goal of automation from process efficiency to redesigned, intelligent workflow orchestration that drives greater value for customers.

They are also converging on a common architecture: AI factories, model gateways and agent platforms are replacing ad hoc point deployments as the standard foundation. Reusable components, such as shared model libraries, governed data pipelines and standardised APIs, are enabling them to scale AI across functions with materially greater speed and consistency. Governance is being embedded at the point of model development, deployment and ongoing monitoring, rather than applied retrospectively.

Operating a real-time lakehouse architecture defines the leaders in this cohort. They integrate stream and batch processing to support real-time customer tagging, fraud monitoring, regulatory reporting and mobile data services, connecting previously siloed source systems into a unified operational foundation. This enables faster fraud detection, more dynamic customer segmentation and more responsive regulatory reporting, all from a single governed data layer.
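As a rough illustration of what integrating stream and batch processing over one data layer can mean in practice, the sketch below keeps a single in-memory customer view that is loaded from a periodic batch snapshot and then updated by individual stream events. Everything in it is an assumption made for illustration: the `CustomerView` record, the function names, the tag names and the thresholds are hypothetical and do not describe any bank's actual platform.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerView:
    """Hypothetical unified customer record served from one governed layer."""
    customer_id: str
    total_spend: float = 0.0
    tags: set = field(default_factory=set)


def apply_batch(views, batch_rows):
    """Batch path: load a periodic snapshot of (customer_id, total_spend) rows."""
    for customer_id, total_spend in batch_rows:
        view = views.setdefault(customer_id, CustomerView(customer_id))
        view.total_spend = total_spend
    return views


def apply_stream_event(views, customer_id, amount,
                       review_threshold=10_000.0, segment_threshold=50_000.0):
    """Stream path: update the same view as each transaction arrives.

    Both thresholds are illustrative placeholders, not real policy values.
    """
    view = views.setdefault(customer_id, CustomerView(customer_id))
    view.total_spend += amount
    if amount >= review_threshold:
        view.tags.add("review_for_fraud")   # real-time fraud monitoring tag
    if view.total_spend >= segment_threshold:
        view.tags.add("high_value")         # real-time segmentation tag
    return view
```

Because both paths write to the same `views` store, a fraud rule and a segmentation rule can read one consistent record instead of reconciling separate siloed systems, which is the property the paragraph above attributes to the lakehouse approach.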

They also build a robust governance architecture. Rather than deploying AI use cases first and adding controls retrospectively, they construct repeatable enterprise operating capabilities, embedding governance, security, knowledge engineering and delivery frameworks before scaling applications.

What distinguishes these architectures from earlier generations of banking technology investment is the deliberate codification of enterprise knowledge—formal and tribal—as a productive asset: structured, governed and deployable rather than a byproduct of operations. This orientation positions these institutions for compounding returns as model capability improves.

AI is no longer confined to back-office support. It is moving increasingly into frontline decisioning and value creation, with risk, fraud, compliance and trust emerging as the principal competitive battlegrounds.

AI differentiation is shifting—away from model access (increasingly commoditised) and toward proprietary data control, metadata, lineage governance and institutional knowledge embedded in AI systems. Architecture modernisation is assessed not by legacy metrics of cost or accuracy alone, but by capacity to support AI at enterprise scale.

Mixed-model architectures—such as JPMorgan Chase’s LLM Suite—replace single-provider dependency, reflecting both risk management discipline and the practical reality that no single foundation model optimises across every use case. Centres of excellence and dedicated AI transformation offices are emerging as the coordination layer, ensuring that platform build, regulatory compliance, talent development and business ownership advance in concert.

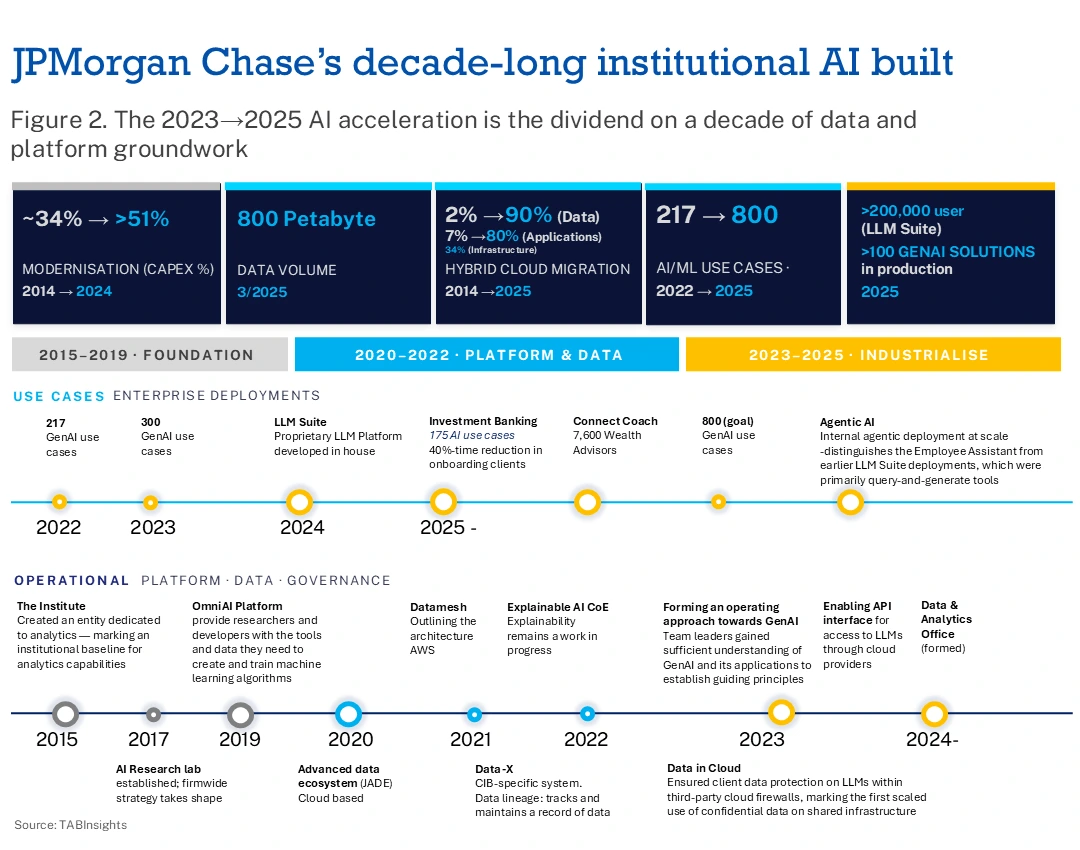

JPMorgan Chase’s decade-long institutional AI build

JPMorgan Chase’s (JPMC) artificial intelligence programme is not a product of the generative AI moment but the outcome of deliberate, decade-long institutional construction, built in sequence: infrastructure first, then internal productivity, then client-facing application.

The foundation was laid in data infrastructure. JPMC built JADE—its advanced data ecosystem—as the primary data movement and management layer, enabling ingestion, lineage tracking and governance of data across operations in more than 100 countries. At its scale, an exabyte of data moves across the firm daily. Simultaneously, the firm invested approximately two billion dollars in four new private cloud-based data centres and executed systematic migration of applications and workloads to the cloud. By 2025, approximately 80 per cent of applications were running on modern cloud infrastructure, with over 90 per cent of analytical data migrated to public cloud.

On that data foundation, JPMC built OmniAI—its centralised machine learning and AI development platform—to eliminate duplication across business lines, provide governed compute environments for experimentation and embed the security and auditability controls required for regulated financial-grade AI. OmniAI serves as the research and development engine powering the firm’s applied AI capabilities.

The generative AI layer followed. LLM Suite, launched in 2024 and reaching more than 200,000 users within eight months, functions not as a standalone chatbot but as an integrated ecosystem connecting AI to firm-wide data, applications and workflows. Asset and Wealth Management was the first division to deploy generative AI, piloting a copilot tool for its private banking arm in July 2024.

Less visible is the organisational architecture governing this deployment. The leadership structure holds four members of JPMC’s 15-person operating committee directly accountable for AI outcomes. This core team comprises Global CIO Lori Beer, Chief Data and Analytics Officer Teresa Heitsenrether, CEO of Asset and Wealth Management Mary Callahan Erdoes and Chief Risk Officer Ashley Bacon. This is not a technology sub-committee but C-suite accountability embedded at the firm’s highest decision-making level. Heitsenrether’s office, formally institutionalised as the Chief Data and Analytics Office in 2024, oversees data use, governance and controls firmwide and reports directly to CEO Jamie Dimon.

Below this leadership layer, the firm assembled specialist capability through external recruitment. Chief Technology Officer Sri Shivananda joined from PayPal before moving to the CIO role in payments in 2025; CIO of the Chief Data and Analytics Office Manoj Sindhwani was recruited from Amazon, where he led Alexa development teams; and CIO of Infrastructure Platforms Darrin Alves came from Amazon’s e-commerce infrastructure group. This reflects a deliberate strategy to bring hyperscaler-grade engineering discipline into a regulated financial institution.

For applied deployment, the firm operates AI squads embedded in every business line: groups of data scientists, engineers and bankers pursuing specific, outcome-oriented problems. Drew Cukor, who left for TWG Global in 2025 as head of AI, led JPMC’s AI transformation and integration across business lines, bringing experience from high-risk operational settings. AI research was led for eight years by Manuela Veloso, Head of AI Research and former Carnegie Mellon professor, who recently departed. Her team focused on multi-agent simulation, synthetic data and data discovery. These high-level departures suggest that the JPMC AI playbook is viewed as a roadmap for the wider financial industry.

Risk governance is embedded directly in the technology layer. Hundreds of risk managers were trained in Python, not to write code but to interrogate the inner workings of AI systems and hold engineers accountable for model behaviour. The firm pays particular attention to the calibration of self-learning algorithms. JPMC deliberately pursues a model-agnostic aggregation strategy, abstracting itself from single-provider dependency to optimise across capabilities, performance and cost as the market evolves.
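To make the idea of model-agnostic aggregation more concrete, the following is a minimal sketch of a gateway that routes each request to the cheapest registered model supporting the required capability. It is an illustration under stated assumptions, not a description of JPMC’s implementation: `ModelOption`, `ModelGateway`, the capability labels, the cost figures and the stub handlers are all hypothetical, and a production gateway would also weigh latency, quality and policy controls.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ModelOption:
    """Hypothetical registry entry for one underlying model provider."""
    name: str
    capabilities: frozenset
    cost_per_1k_tokens: float          # illustrative figure, not a real price
    handler: Callable[[str], str]      # stub standing in for a provider call


class ModelGateway:
    """Routes by capability and cost so callers never name a provider."""

    def __init__(self, options):
        self._options = list(options)

    def route(self, capability):
        candidates = [o for o in self._options if capability in o.capabilities]
        if not candidates:
            raise LookupError(f"no registered model supports {capability!r}")
        # Pick the cheapest model that offers the capability.
        return min(candidates, key=lambda o: o.cost_per_1k_tokens)

    def complete(self, capability, prompt):
        return self.route(capability).handler(prompt)


# Usage with two stub providers (names and costs are made up).
gateway = ModelGateway([
    ModelOption("provider_a", frozenset({"summarise", "code"}), 3.0,
                lambda p: f"[provider_a] {p}"),
    ModelOption("provider_b", frozenset({"summarise"}), 1.0,
                lambda p: f"[provider_b] {p}"),
])
```

Because callers ask for a capability rather than a provider, swapping or adding a model only changes the registry, which is the practical meaning of abstracting away from single-provider dependency.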

By 2025, the bank’s own disclosures placed AI-generated value at between one and two billion dollars annually, across credit decisioning, fraud prevention, trading optimisation, customer personalisation and operational efficiency. The technology budget committed for 2026 is approximately $19.8 billion (up from $17 billion in 2024 and $9 billion in 2014), with a capital expense portion exceeding 51% devoted to transformation. Against that backdrop, the recent rollout of the Employee Assistant, the bank’s first internally deployed agentic AI system, marks entry into the next phase: from tools that assist to systems that act.
